#3, what work?

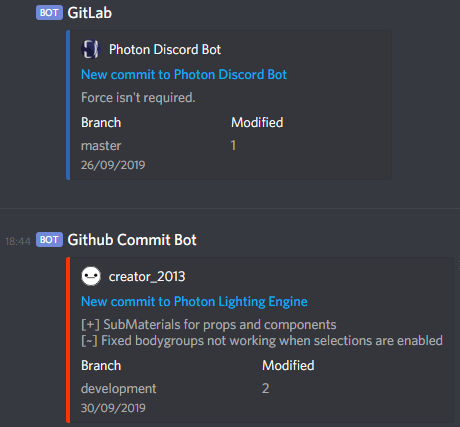

Photon Lighting Engine

Though only a recent addition to the Photon Core team, I’ve put in large amounts of effort,

from improving the use of VCS within the team, to building notification services.

This sits alongside code I have contributed to the Photon Lighting Engine core.

L² Plates

L² Plates is a system written from the ground up in Garry’s Mod, using Lua as an embedded scripting language.

The plugin itself is sold on GModStore, with a more advanced build system built around it.

This system includes a set of Docker containers, running a database and API service for the development tools,

and both GitLab and GitHub pipelines for building and deploying the addon versions,

alongside both the in-game system and the development tools themselves.

Limelight Gaming

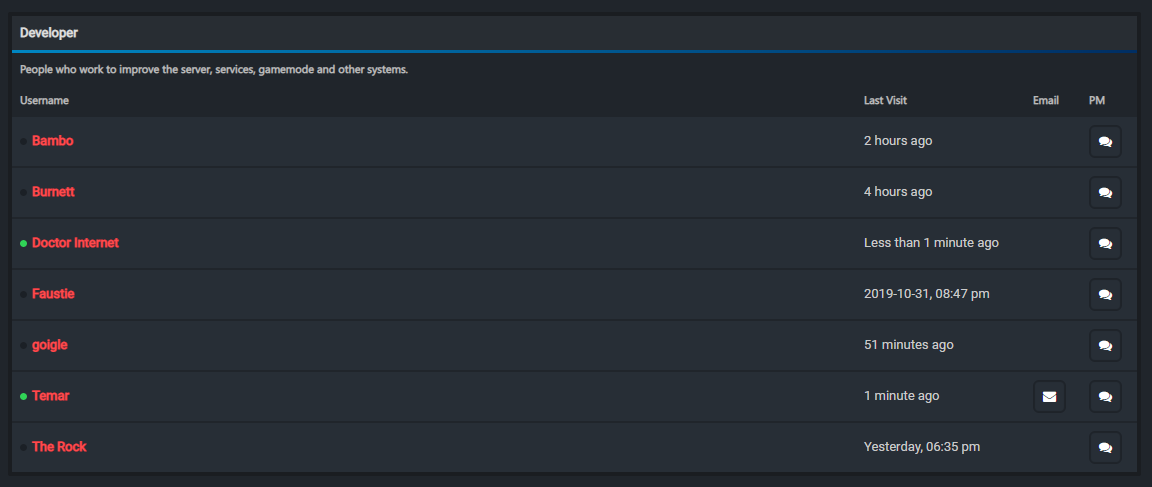

Development Lead

Within Limelight, as the development lead, a major part of my role has been improving team efficiency.

Our team is composed of a small number of developers, most of whom work with Limelight as a secondary or tertiary job.

This has led to an improvement in my soft skills: people management and conflict resolution.

These have been of key importance here: when one person leaving drops staffing by 15%, each person is key.

Limelight Gaming

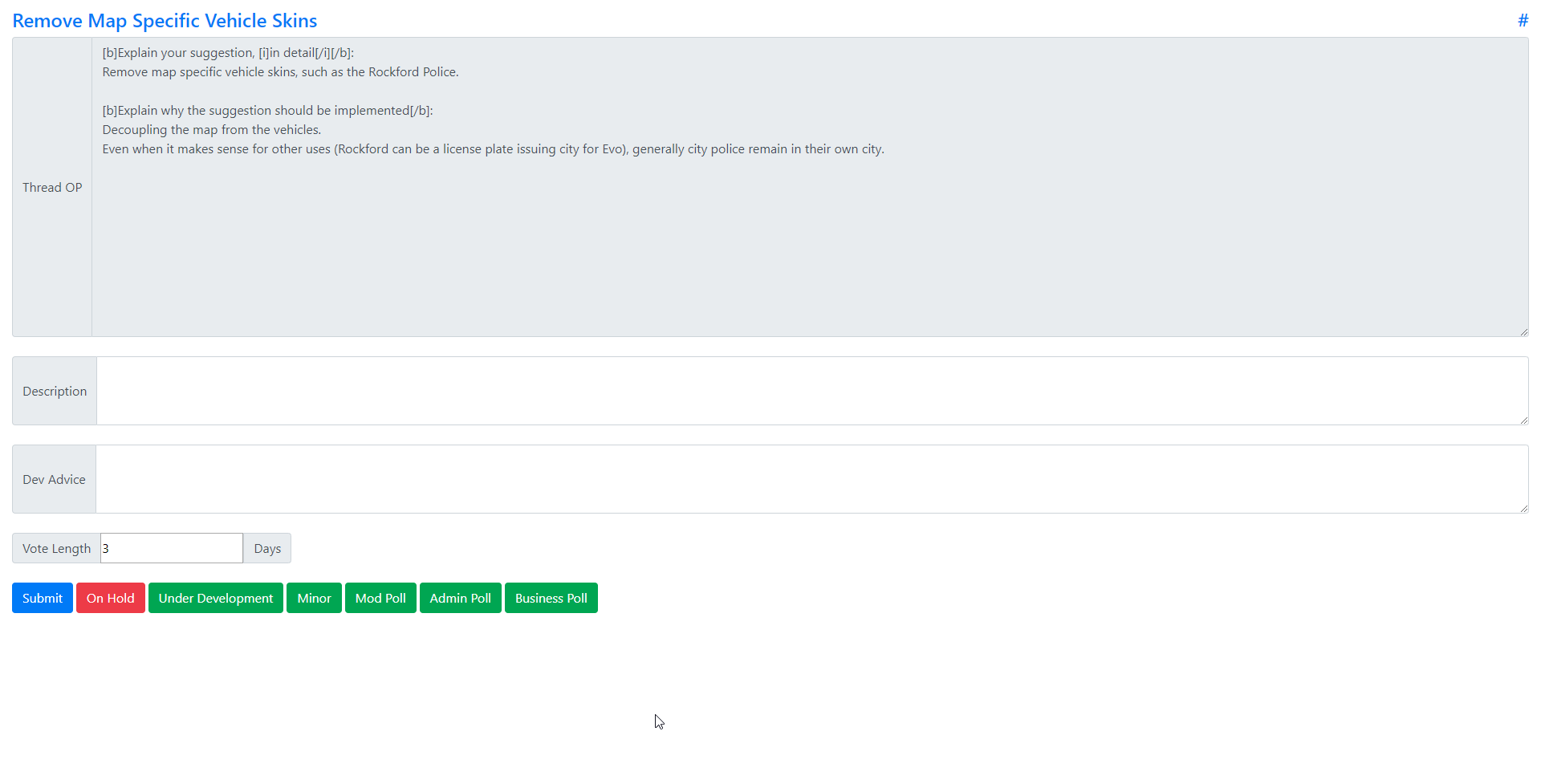

Workflow Optimisation

With our low staff levels, we’ve also had to develop custom tooling in order to improve our workflows.

For example, a system I developed was the suggestions management console,

a key system in improving our Suggestions Workflow.

This has led to a 50% decrease in the time spent processing internal suggestion reviews.

In addition, many parts of this system are also fully automated, from polls to requesting review to automatic closing.
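
As a rough illustration of that automation (a minimal sketch, not the console's actual code), a suggestion can be modelled as moving through stages on a timer. The stage names, the `nextStage` function, the vote fields and both time limits below are assumptions made for the example.

```javascript
// Assumed time limits, chosen only for illustration.
const DAY_MS = 24 * 60 * 60 * 1000;
const POLL_DURATION_MS = 7 * DAY_MS;   // hypothetical: polls run for a week
const STALE_AFTER_MS = 30 * DAY_MS;    // hypothetical: idle reviews close after 30 days

// Decide which stage a suggestion should be in at time `now` (milliseconds).
// `suggestion` is a hypothetical record: { stage, stageEnteredAt, votesFor, votesAgainst }.
function nextStage(suggestion, now) {
  const timeInStage = now - suggestion.stageEnteredAt;

  if (suggestion.stage === "poll" && timeInStage >= POLL_DURATION_MS) {
    // Poll finished: request a review if it passed, otherwise close it.
    return suggestion.votesFor > suggestion.votesAgainst ? "review" : "closed";
  }

  if (suggestion.stage === "review" && timeInStage >= STALE_AFTER_MS) {
    // Nobody has acted on the review for too long: close automatically.
    return "closed";
  }

  // Nothing to do yet.
  return suggestion.stage;
}
```

Run on a schedule, a function like this keeps staff out of the routine transitions, leaving only the review decision itself as manual work.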

Limelight Gaming

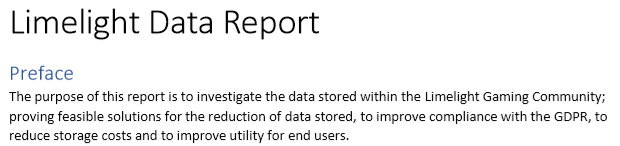

Data Protection

Whilst for many a boring subject, data protection is of key interest to me, which is why I put myself forward for the data protection role.

Within the role, I worked on improving compliance with regulations (including the GDPR), implementing workflows for subject requests and

training other staff to improve data protection throughout the company.

Limelight Gaming

The Emergency Vehicle Update

One of the major updates I worked on for Limelight was the Emergency Vehicle Update.

This involved working alongside three other texture artists on over 80 vehicles,

creating over 70 liveries and hundreds of configurations.

Despite one of the texture artists becoming unavailable, and some disagreements between the artists and other staff members,

the update was released only a few weeks late (impressive considering the multi-week delays that occurred during development).

This not only allowed me to work on other development skills such as texture work, but also helped me develop my soft skills.

Glorified Studios

Deployment

One of my key pieces of work at Glorified Studios has been the development of

integrated build and deployment solutions.

Across a varied range of engines, from Unity to Love2D,

my role has been to ensure that developers face as little friction as possible

between writing code and having the built changes deployed to consumers.

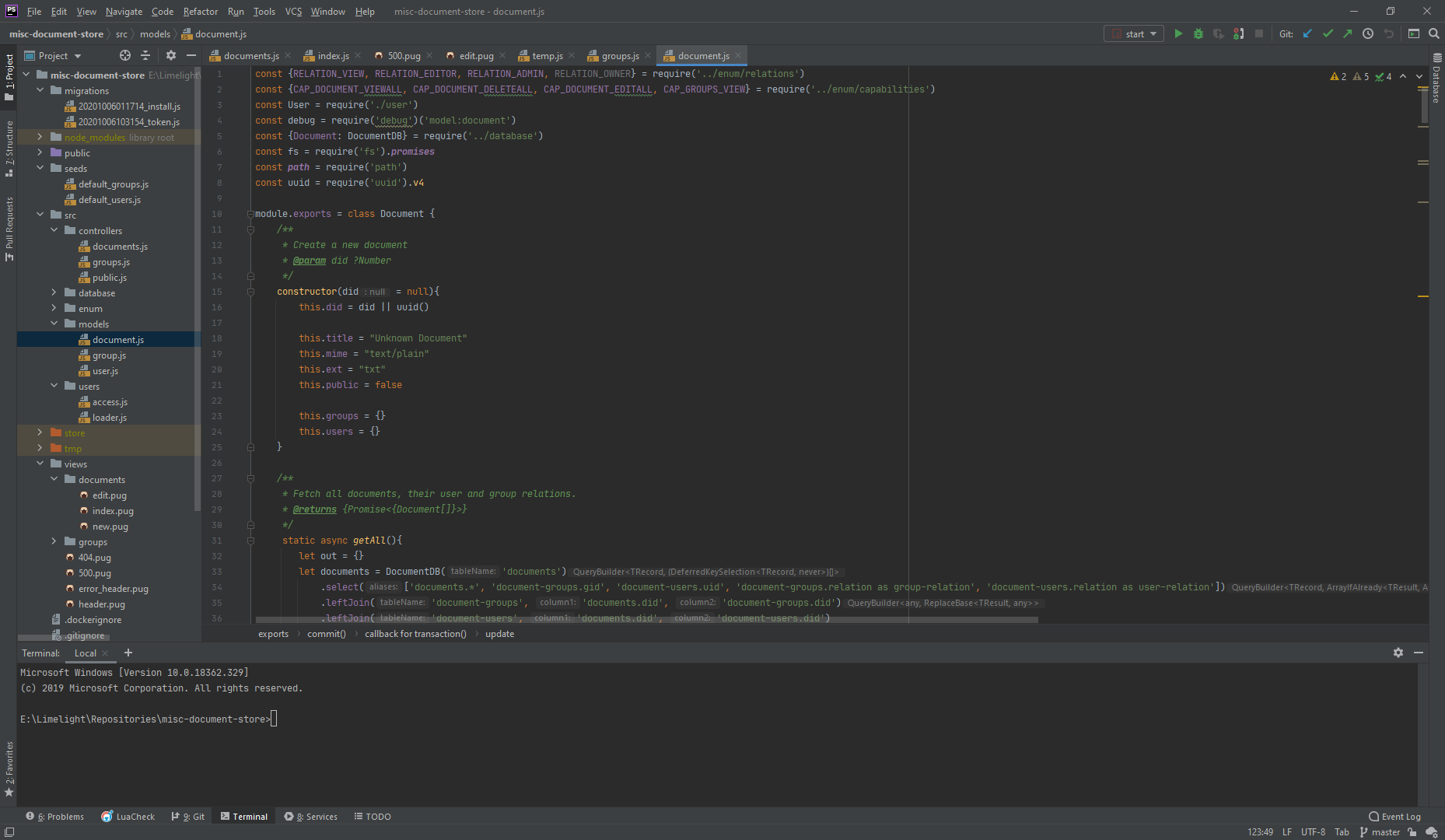

Limelight Gaming

Secure Document Store

One of my most used pieces of software to date is the secure document store.

This Node.js application was written to securely store documents,

providing selective access to users based on various criteria, such as group filtering.

Access to individual documents is controlled by an ACL,

with groups also having individual permission nodes for audit and management purposes.

These are all passed through to a backend secured with current best practices,

such as the use of non-incremental IDs and using external authentication sources

to remove the requirement for storing passwords locally.
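
To make the idea concrete, here is a minimal sketch of an ACL check combined with non-incremental IDs. It is not the store's real code: the in-memory `documents` map, the `createDocument` and `canRead` functions, and the shape of the `user` object are all assumptions for illustration. Only `randomUUID` from Node's built-in `crypto` module is a real API.

```javascript
const { randomUUID } = require("node:crypto");

// Hypothetical in-memory store; the real application's storage layer is not shown here.
const documents = new Map();

// Create a document readable by the given groups and return its ID.
function createDocument(title, allowedGroups) {
  // A random UUID rather than an incrementing integer,
  // so document IDs cannot be discovered by counting upwards.
  const id = randomUUID();
  documents.set(id, { id, title, acl: new Set(allowedGroups) });
  return id;
}

// `user` is assumed to come from an external authentication source,
// so no passwords are stored or checked here.
function canRead(user, documentId) {
  const doc = documents.get(documentId);
  if (!doc) return false; // unknown ID: deny
  // Allow access if any of the user's groups appears on the document's ACL.
  return user.groups.some((group) => doc.acl.has(group));
}

// Usage: a staff-only document.
const guidelinesId = createDocument("Staff guidelines", ["staff"]);
console.log(canRead({ groups: ["staff"] }, guidelinesId));   // true
console.log(canRead({ groups: ["visitor"] }, guidelinesId)); // false
```

Denying by default for unknown IDs means a guessed or mistyped ID behaves the same as a document the user simply cannot see.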

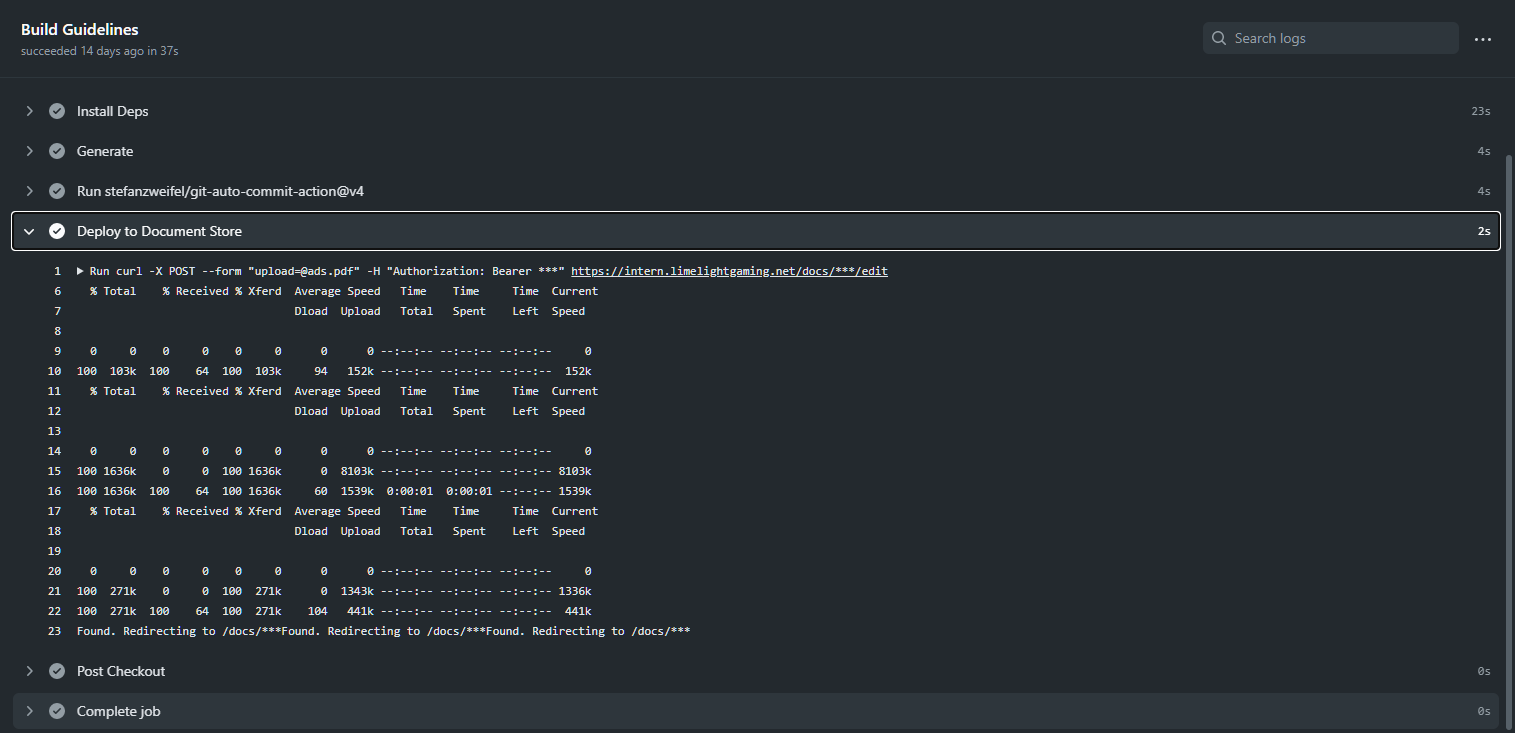

Furthermore, the application also supports automatic updating of documents from external sources,

such as our staff guidelines, which are automatically built from AsciiDoc source files, with the built files deployed to the store.